Category: Culture

-

Evolving Our Tech Landscape – ottonova Tech Radar Q3 2025 Update

At ottonova, we continuously evaluate our technology stack to ensure we’re using the best tools to deliver exceptional digital health experiences. Our quarterly Tech Radar update reflects our commitment to technological excellence and innovation. Here’s a summary of the key changes in our Q3 2025 Tech Radar update. Infrastructure Evolution: Backstage has gained prominence…

-

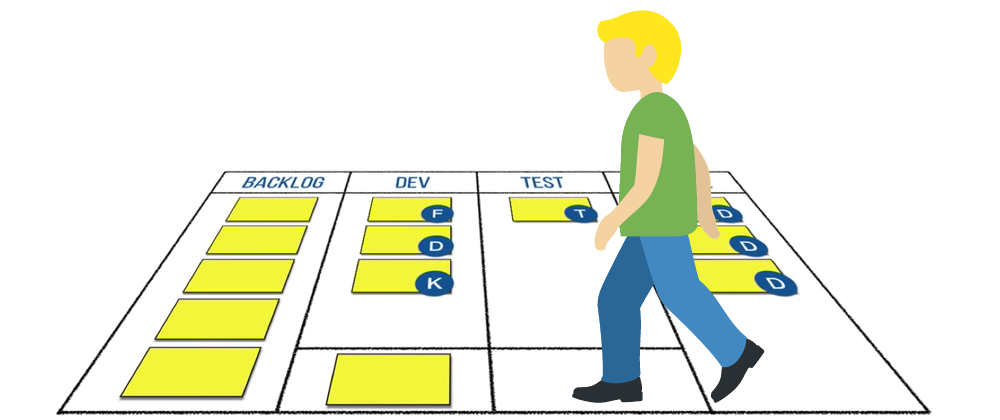

How We Transformed Our Daily Meetings for the Better

Transform your meetings with ‘Walking the Board’, an approach inspired by ‘Development That Pays’. Discover how we made our stand-ups more collaborative, more efficient and more focused on team success, and explore actionable insights to revitalize your daily meetings and strengthen teamwork.

-

Introducing the ottonova Tech Radar

We have always promoted openness about our tech stack, and the ottonova Tech Radar is the next step in that direction. What is the Tech Radar? The ottonova Tech Radar is a list of technologies. Each technology is given an assessment outcome, called a ring assignment, and there are four rings, defined as follows: ADOPT – Technologies we…

-

4 rules for building successful cross-functional teams

ottonova has a long history of using cross-functional teams for Software Engineering. Very early on, we tried to establish them to become more productive and product-centred. It took us a few tries to get it right, and oh boy, did we make a lot of mistakes along the way. I would like to share…

-

Recruiting Backend Engineers at ottonova

Here are some words about how the Backend Team goes about finding new team members. We want to do our part and share with the community, as well as provide a bit more transparency into ottonova and how we are building the state-of-the-art software that powers Germany’s first digital health insurance. This article covers what we…

-

Continuing our Hackathon tradition

At ottonova, we have taken on the challenge of bringing a slow-moving and largely antiquated business, health insurance in Germany, into the 21st century. For over three years now, we’ve been offering our customers not only competitive insurance tariffs but also the digital products that go along with them. All this to…

-

Bulgaria PHP Conference 2019

Let’s talk about organization, preparation and venue first. From my point of view, the organizers did a lot to make this conference great; at the very least, they tried their best. The conference, like the workshop, took place in the very center of the city, in the biggest public hall. It was quite easy…